Concerning AI | Existential Risk From Artificial Intelligen…

Episodes of Concerning AI

Or do we? http://traffic.libsyn.com/friendlyai/ConcerningAI-episode-0070-2018-09-30.mp3

Ted interviews Jacob Ward, former editor of Popular Science and a journalist for many outlets. Jake’s article about the book he’s writing: Black Box. Jake’s website: JacobWard.com. Implicit bias tests at Harvard. We discuss the idea that we’re currently

We love the OpenAI Charter. This episode is an introduction to the document and gets pretty dark. Lots more to come on this topic!

http://traffic.libsyn.com/friendlyai/ConcerningAI-episode-0066-2018-04-01.mp3

There’s No Fire Alarm for Artificial General Intelligence by Eliezer Yudkowsky http://traffic.libsyn.com/friendlyai/ConcerningAI-episode-0065-2018-03-18.mp3

We discuss Intelligence Explosion Microeconomics by Eliezer Yudkowsky http://traffic.libsyn.com/friendlyai/ConcerningAI-episode-0064-2018-03-11.mp3

http://traffic.libsyn.com/friendlyai/ConcerningAI-episode-0062-2018-03-04.mp3

Some believe civilization will collapse before the existential AI risk has a chance to play out. Are they right?

Timeline For Artificial Intelligence Risks. Peter’s Superintelligence Year predictions (5%, 50%, and 95% chance): 2032/2044/2059. You can get in touch with Peter at HumanCusp.com and Peter@HumanCusp.com. For reference (not discussed in this episode):

SpectreAttack.com http://traffic.libsyn.com/friendlyai/ConcerningAI-episode-0059-2018-01-14.mp3

There are understandable reasons why accomplished leaders in AI disregard AI risks. We discuss what they might be. Wikipedia’s list of cognitive biases. AlphaZero. Virtual Reality. Recorded January 7, 2017, originally posted to Concerning.AI http

If the Universe Is Teeming With Aliens, Where is Everybody? http://traffic.libsyn.com/friendlyai/ConcerningAI-episode-0057-2017-11-12.mp3

Julia Hu, founder and CEO of Lark, an AI health coach, is our guest this episode. Her tech is really cool and clearly making a positive difference in lots of people's lives right now. Longer term, she doesn't see much to worry about.

Ted had a fascinating conversation with Sean Lane, founder and CEO of Crosschx.

We often talk about how no one really knows when the singularity might happen (if it does), when human-level AI will exist (if ever), when we might see superintelligence, etc. Back in January, we made up a three-number system for talking about ou

Great voice memos from listeners led to interesting conversations.

We continue our mini-series about paths to AGI. Sam Harris’s podcast about the nature of consciousness. Robot or Not podcast. See also: 0050: Paths to AGI #3: Personal Assistants; 0047: Paths to AGI #2: Robots; 0046: Paths to AGI #1: Tools. http:/

Rodney Brooks article: The Seven Deadly Sins of Predicting the Future of AI

3rd in a series about the future of current narrow AIs.

Read After On by Rob Reid, before you listen or because you listen.

This is our 2nd episode thinking about possible paths to superintelligence focusing on one kind of narrow AI each show. This episode is about embodiment and robots. It's possible we never really agreed about what we were talking about and need

For show notes, please see https://concerning.ai/2017/08/29/0048-ai-xprize-and-thrival-festival-special-mini-episode/

How might we get from today's narrow AIs to AGI? This episode focuses on tools.

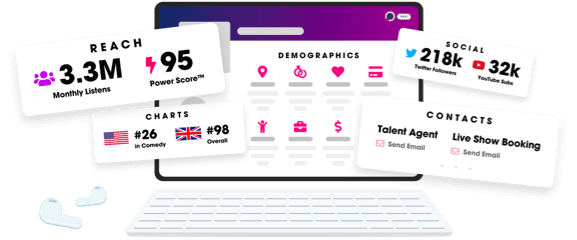

Unlock more with Podchaser Pro

- Audience Insights

- Contact Information

- Demographics

- Charts

- Sponsor History

- and More!

- Account

- Register

- Log In

- Find Friends

- Resources

- Help Center

- Blog

- API

Podchaser is the ultimate destination for podcast data, search, and discovery. Learn More

- © 2025 Podchaser, Inc.