Clement Bonnet discusses his novel approach to the ARC (Abstraction and Reasoning Corpus) challenge. Unlike approaches that fine-tune LLMs or generate samples at inference time, Clement's method encodes input-output pairs into a latent space, refines that latent representation with a search algorithm, and decodes it to produce outputs for new inputs. The architecture is trained end to end with a VAE loss that combines a reconstruction term and a prior term.
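
To make the encode, search, decode loop concrete, here is a minimal toy sketch in plain Python. It is not the LPN implementation: the affine `decode`, the crude `encode`, and the names `latent_search` and `solve` are all hypothetical stand-ins chosen for illustration, and the "tasks" are scalar functions rather than ARC grids. It only shows the general idea of refining a latent by gradient descent on the given pairs before decoding a new input.

```python
# Toy sketch of test-time latent search. The affine decoder and the crude
# encoder below are hypothetical stand-ins, not the networks used in LPN.

def decode(z, x):
    """Toy decoder: the latent z = (a, b) acts as the 'program' y = a * x + b."""
    return z[0] * x + z[1]

def encode(pairs):
    """Toy encoder: a rough initial latent guessed from the input-output pairs."""
    n = len(pairs)
    mean_x = sum(x for x, _ in pairs) / n
    mean_y = sum(y for _, y in pairs) / n
    return [1.0, mean_y - mean_x]

def latent_search(pairs, steps=1000, lr=0.05):
    """Refine the latent by gradient descent on the mean squared decoding error."""
    z = encode(pairs)
    n = len(pairs)
    for _ in range(steps):
        # Analytic gradients of mean((decode(z, x) - y) ** 2) w.r.t. a and b.
        grad_a = sum(2 * (decode(z, x) - y) * x for x, y in pairs) / n
        grad_b = sum(2 * (decode(z, x) - y) for x, y in pairs) / n
        z = [z[0] - lr * grad_a, z[1] - lr * grad_b]
    return z

def solve(pairs, new_input):
    """Encode the pairs, search the latent space, then decode the new input."""
    return decode(latent_search(pairs), new_input)

if __name__ == "__main__":
    demo_pairs = [(0, 1), (1, 3), (2, 5)]  # consistent with y = 2x + 1
    print(solve(demo_pairs, 3))
```

With the demo pairs above, the initial encoder guess is wrong, and the search step is what moves the latent toward one that explains all three pairs; the decoded value for the new input 3 should come out close to 7.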

SPONSOR MESSAGES:

***

CentML offers competitive pricing for GenAI model deployment, with flexible options to suit a wide range of models, from small to large-scale deployments. Check out their super fast DeepSeek R1 hosting!

https://centml.ai/pricing/

Tufa AI Labs is a brand new research lab in Zurich, started by Benjamin Crouzier, focussed on o-series style reasoning and AGI. They are hiring a Chief Engineer and ML engineers. Events in Zurich.

Go to https://tufalabs.ai/

***

TRANSCRIPT + RESEARCH OVERVIEW:

https://www.dropbox.com/scl/fi/j7m0gaz1126y594gswtma/CLEMMLST.pdf?rlkey=y5qvwq2er5nchbcibm07rcfpq&dl=0

Clem and Matthew:

https://www.linkedin.com/in/clement-bonnet16/

https://github.com/clement-bonnet

https://mvmacfarlane.github.io/

TOC

1. LPN Fundamentals

[00:00:00] 1.1 Introduction to ARC Benchmark and LPN Overview

[00:05:05] 1.2 Neural Networks' Challenges with ARC and Program Synthesis

[00:06:55] 1.3 Induction vs Transduction in Machine Learning

2. LPN Architecture and Latent Space

[00:11:50] 2.1 LPN Architecture and Latent Space Implementation

[00:16:25] 2.2 LPN Latent Space Encoding and VAE Architecture

[00:20:25] 2.3 Gradient-Based Search Training Strategy

[00:23:39] 2.4 LPN Model Architecture and Implementation Details

3. Implementation and Scaling

[00:27:34] 3.1 Training Data Generation and re-ARC Framework

[00:31:28] 3.2 Limitations of Latent Space and Multi-Thread Search

[00:34:43] 3.3 Program Composition and Computational Graph Architecture

4. Advanced Concepts and Future Directions

[00:45:09] 4.1 AI Creativity and Program Synthesis Approaches

[00:49:47] 4.2 Scaling and Interpretability in Latent Space Models

REFS

[00:00:05] ARC benchmark, Chollet

https://arxiv.org/abs/2412.04604

[00:02:10] Latent Program Spaces, Bonnet, Macfarlane

https://arxiv.org/abs/2411.08706

[00:07:45] Kevin Ellis work on program generation

https://www.cs.cornell.edu/~ellisk/

[00:08:45] Induction vs transduction in abstract reasoning, Li et al.

https://arxiv.org/abs/2411.02272

[00:17:40] VAEs, Kingma, Welling

https://arxiv.org/abs/1312.6114

[00:27:50] re-ARC, Hodel

https://github.com/michaelhodel/re-arc

[00:29:40] Grid size in ARC tasks, Chollet

https://github.com/fchollet/ARC-AGI

[00:33:00] Critique of deep learning, Marcus

https://arxiv.org/vc/arxiv/papers/2002/2002.06177v1.pdf

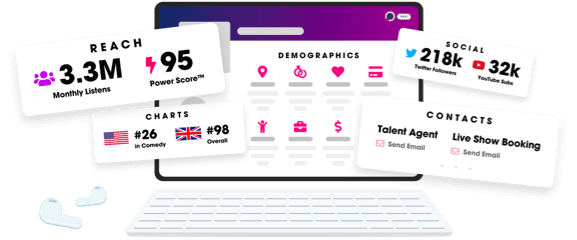

Unlock more with Podchaser Pro

- Audience Insights

- Contact Information

- Demographics

- Charts

- Sponsor History

- and More!

- Account

- Register

- Log In

- Find Friends

- Resources

- Help Center

- Blog

- API

Podchaser is the ultimate destination for podcast data, search, and discovery. Learn More

- © 2025 Podchaser, Inc.

- Privacy Policy

- Terms of Service

- Contact Us